» A summary and guide to how I render Karma jobs on Deadline with Husk, directly from Houdini USD ROPs in Solaris. «

The standard Deadline submitter implementation uses Hython to start Houdini render jobs. By the way, I am using Deadline version 10.1.18.4.

Why Husk and not Hython?

Like Husk, Hython is a command-line tool. It is a kind of shell wrapper: a Houdini instance without a GUI, controllable via Python. Because it is a full Houdini instance, startup and the loading time of scenes/.hip files, cooking, etc. are an important factor. Another downside is that it needs a complete Houdini license.

Husk only needs a render license for Karma. With my Houdini FX license I can use 5 Karma render licenses out of the box. (Indie has 1 render license for Karma.)

The render nodes just need a proper Houdini installation and a Karma license applied in the License Manager.

Husk is made for rendering USD files (with any kind of Hydra render delegate). So you have to export your USD render files manually, or build an extra export job on Deadline (not a topic of this blog post).

To build a custom export and submitter script for Houdini, we need some scripts and modifications in the Deadline Repository.

Let's start.

First, we take a look at the Houdini docs:

https://www.sidefx.com/docs/houdini/ref/utils/husk.html

Here you can find all the command-line options to use Husk or to adapt your Deadline scripts to your needs. We need them later when we modify some of our Python scripts.
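As a rough orientation, a single-frame Husk call can be assembled like the sketch below. It only uses flags that appear later in this post; the file paths and the helper name build_husk_command are placeholders made up for illustration, and the binary is assumed to be reachable as "husk".

```python
import shlex


def build_husk_command(husk_bin, usd_file, frame, output_image, verbosity=2):
    """Assemble a single-frame husk call as an argument list (hypothetical helper)."""
    return [
        husk_bin,
        usd_file,
        "--verbose", "a{}".format(verbosity),  # "a" prefix: Alfred-style progress output
        "--frame", str(frame),
        "--frame-count", "1",                  # render exactly one frame
        "-o", output_image,
        "--make-output-path",                  # create the output folder if missing
    ]


# Placeholder paths, not taken from a real project.
cmd = build_husk_command(
    "husk", "/proj/usd/shot010.usd", 1001, "/proj/render/shot010.1001.exr"
)
print(shlex.join(cmd))
```

Building the call as a list keeps paths with spaces intact if you later hand it to subprocess instead of printing it.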

About Deadline jobs:

There are different “types” of jobs you can send to Deadline (Houdini, Nuke, Fusion, etc.). To send a proper “CallDeadlineCommand” via Python you just need two things:

A jobInfoFile and a pluginInfoFile, which are just text files holding key=value pairs, basically dictionaries of data.

The jobInfoFile, for instance, contains information about the plugin, job name, frame range, render pool, etc. The pluginInfoFile contains the scene file location and more.

For more details, see the Deadline documentation on commands and job submission.

Deadline has no built-in plugin or GUI entry in the Deadline Monitor to render via the Husk command line. There are several plugins you can find on GitHub. I am using a modified version of this one:
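To make the two files more concrete, here is a minimal sketch that writes a dictionary out as key=value lines, the same layout the submitter function further down produces. The keys are a small subset of the ones used in this post; the values, the file names and the helper write_info_file are made up for illustration.

```python
import os
import tempfile


def write_info_file(path, entries):
    """Write a dict as Key=Value lines, one entry per line (hypothetical helper)."""
    with open(path, "w") as handle:
        for key, value in entries.items():
            handle.write("{}={}\n".format(key, value))


# Example values only; a real job carries many more keys.
job_info = {
    "Plugin": "HuskStandalone",
    "Name": "shot010 - /stage/usd_rop1",
    "Pool": "husk",
    "Frames": "1001-1010",
    "ChunkSize": 1,
}
plugin_info = {
    "SceneFile": "/proj/usd/shot010.usd",
    "LogLevel": 2,
}

tmp_dir = tempfile.mkdtemp()
job_info_file = os.path.join(tmp_dir, "husk_job_info.job")
plugin_info_file = os.path.join(tmp_dir, "husk_plugin_info.job")
write_info_file(job_info_file, job_info)
write_info_file(plugin_info_file, plugin_info)

with open(job_info_file) as handle:
    print(handle.read())
```

The two resulting file paths are what gets passed to CallDeadlineCommand as its argument list.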

https://github.com/DavidTree/HuskStandaloneSubmitter

Follow the installation steps and copy the files to your Deadline Repository. You do not really need the submission script, which lets you add Husk jobs from the Deadline Monitor, but it is nice to have.

Once every file is in place, you get a “HuskStandalone” entry in Deadline Monitor > Tools > Configure Plugins…

Set a proper path to your Husk binary. In the screenshot I have a <ns_version> placeholder instead of an actual Houdini folder, because I replace that string dynamically later in the Python script with the proper Houdini version.

You can ignore that and just add a path like this (Windows example):

C:\Program Files\Side Effects Software\Houdini 19.5.435\bin\husk.exe
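If you do want the placeholder variant, the substitution itself is a plain string replace. The sketch below assumes the configured path contains <ns_version> in place of the Houdini folder name; the template string and the helper name resolve_husk_path are illustrative, only the replace logic mirrors the plugin script shown later.

```python
def resolve_husk_path(template, hou_version):
    """Swap the <ns_version> placeholder for the 'Houdini x.y.z' folder name."""
    return template.replace("<ns_version>", "Houdini " + hou_version)


# Assumed plugin configuration value with the placeholder in the folder position.
template = r"C:\Program Files\Side Effects Software\<ns_version>\bin\husk.exe"
resolved = resolve_husk_path(template, "19.5.435")
print(resolved)

# A fixed path without the placeholder passes through unchanged.
fixed = r"C:\Program Files\Side Effects Software\Houdini 19.5.435\bin\husk.exe"
print(resolve_husk_path(fixed, "20.0.000") == fixed)
```

This way one plugin configuration can serve several installed Houdini versions, as long as every render node uses the same folder naming.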

Set a nice plugin icon as well.

Edit some Python files in the Deadline Repository.

First, check that the standard Deadline Houdini submitter is installed and working, so that the Python sys.path has been set correctly. This command in the Houdini Python Shell should not throw an error:

from CallDeadlineCommand import CallDeadlineCommand

This is important, because we add an extra function in here:

*\DeadlineRepository10\submission\Houdini\Main\SubmitHoudiniToDeadlineFunctions.py

# Added to SubmitHoudiniToDeadlineFunctions.py. It relies on names already
# available in that module (hou, os, json, CallDeadlineCommand, GetFrameList,
# GetJobIdFromSubmission).
def SubmitRenderJob_husk( node, jobProperties, dependencies ):
    if jobProperties.get("usdjob"):
        if jobProperties.get("usdjob") == 1:
            assemblyJobIds = []
            jobName = jobProperties.get( "jobname", "Untitled" )
            jobName = "%s - %s"%(jobName, node.path())
            subInfo = json.loads( hou.getenv("Deadline_Submission_Info") )
            homeDir = subInfo["UserHomeDir"]
            jobInfoFile = os.path.join(homeDir, "temp", "houdini_submit_info.job")

            ## job file ##
            with open( jobInfoFile, "w" ) as fileHandle:
                fileHandle.write( "Plugin=HuskStandalone\n" )
                fileHandle.write( "Name=%s\n" % jobName )
                fileHandle.write( "Comment=%s\n" % jobProperties.get( "comment", "" ) )
                fileHandle.write( "Department=%s\n" % jobProperties.get( "department", "" ) )
                fileHandle.write( "Pool=%s\n" % jobProperties.get( "pool", "None" ) )
                fileHandle.write( "SecondaryPool=%s\n" % jobProperties.get( "secondarypool", "" ) )
                fileHandle.write( "Group=%s\n" % jobProperties.get( "group", "None" ) )
                fileHandle.write( "Priority=%s\n" % jobProperties.get( "priority", 50 ) )
                fileHandle.write( "TaskTimeoutMinutes=%s\n" % jobProperties.get( "tasktimeout", 0 ) )
                fileHandle.write( "EnableAutoTimeout=%s\n" % jobProperties.get( "autotimeout", False ) )
                fileHandle.write( "ConcurrentTasks=%s\n" % jobProperties.get( "concurrent", 1 ) )
                fileHandle.write( "MachineLimit=%s\n" % jobProperties.get( "machinelimit", 0 ) )
                fileHandle.write( "LimitConcurrentTasksToNumberOfCpus=%s\n" % jobProperties.get( "slavelimit", False ) )
                fileHandle.write( "LimitGroups=%s\n" % jobProperties.get( "limits", 0 ) )
                fileHandle.write( "JobDependencies=%s\n" % dependencies )
                fileHandle.write( "OnJobComplete=%s\n" % jobProperties.get( "onjobcomplete", "Nothing" ) )
                fileHandle.write( "Frames=%s\n" % GetFrameList( node, jobProperties) )
                fileHandle.write( "ChunkSize=%s\n" % jobProperties.get( "framespertask", 1 ) )

            ## plugin file ##
            pluginInfoFile = os.path.join( homeDir, "temp", "houdini_plugin_info.job")
            with open( pluginInfoFile, "w" ) as fileHandle:
                fileHandle.write( "SceneFile=%s\n" % node.evalParm("lopoutput") )
                fileHandle.write( "LogLevel=%s\n" % jobProperties.get( "usdloglevel", 2 ) )
                fileHandle.write( "HouVersion=%s\n" % jobProperties.get( "ns_pipe_hou_version", "Nothing" ) )
                fileHandle.write( "OutImage=%s\n" % jobProperties.get( "ns_pipe_image_out", "" ) )

            arguments = [ jobInfoFile, pluginInfoFile ]
            jobResult = CallDeadlineCommand( arguments )
            jobId = GetJobIdFromSubmission( jobResult )
            assemblyJobIds.append( jobId )

            print("---------------------------------------------------")
            print("\n".join( [ line.strip() for line in jobResult.split("\n") if line.strip() ] ) )
            print("---------------------------------------------------")

            # hand the submitted job ids back to the caller
            return assemblyJobIds
        else:
            return
    else:
        print("ns_Pipe> Found no usdjob property")

This creates the necessary jobInfoFile and pluginInfoFile. Some of the dictionary (jobProperties) values used here are custom and get created when I trigger the Houdini submitter script, as we will see later.

Now open the custom HuskStandalone.py file:

*\DeadlineRepository10\custom\plugins\HuskStandalone\HuskStandalone.py

This is where the arguments that are sent to Husk for each Deadline task are put together.

#!/usr/bin/env python3
from System import *
from System.Diagnostics import *
from System.IO import *
import os
from Deadline.Plugins import *
from Deadline.Scripting import *
from pathlib import Path

def GetDeadlinePlugin():
    return HuskStandalone()

def CleanupDeadlinePlugin(deadlinePlugin):
    deadlinePlugin.Cleanup()

class HuskStandalone(DeadlinePlugin):
    # functions inside a class must be indented in python - DT
    def __init__( self ):
        self.InitializeProcessCallback += self.InitializeProcess
        self.RenderExecutableCallback += self.RenderExecutable # get the renderExecutable Location
        self.RenderArgumentCallback += self.RenderArgument # get the arguments to go after the EXE

    def Cleanup( self ):
        del self.InitializeProcessCallback
        del self.RenderExecutableCallback
        del self.RenderArgumentCallback

    def InitializeProcess( self ):
        self.SingleFramesOnly=True
        self.StdoutHandling=True
        self.PopupHandling=False
        self.AddStdoutHandlerCallback("USD ERROR(.*)").HandleCallback += self.HandleStdoutError # detect this error
        self.AddStdoutHandlerCallback( r"ALF_PROGRESS ([0-9]+(?=%))" ).HandleCallback += self.HandleStdoutProgress

    # get path to the executable
    def RenderExecutable(self):
        ## the replace fills in the correct Houdini_Version folder ##
        return self.GetConfigEntry( "USD_RenderExecutable" ).replace("<ns_version>", "Houdini " + self.GetPluginInfoEntry("HouVersion"))

    # get the settings that go after the filename in the render command, 3Delight only has simple options.
    def RenderArgument( self ):
        # construct fileName
        # this will only support 1 frame per task
        usdFile = self.GetPluginInfoEntry("SceneFile")
        usdFile = RepositoryUtils.CheckPathMapping( usdFile )
        usdFile = usdFile.replace( "\\", "/" )
        usdPaddingLength = FrameUtils.GetPaddingSizeFromFilename( usdFile )
        frameNumber = self.GetStartFrame() # check this 2021 USD

        argument = ""
        argument += usdFile + " "
        argument += "--verbose a{} ".format(self.GetPluginInfoEntry("LogLevel")) # alfred style output and full verbosity
        argument += "--frame {} ".format(frameNumber)
        argument += "--frame-count 1" + " " #only render 1 frame per task
        #renderer handled in job file.

        ## Custom render output destination ##
        if self.GetPluginInfoEntry("OutImage") != "":
            output_path = os.path.dirname(self.GetPluginInfoEntry("OutImage"))
            out_image_path_parts = self.GetPluginInfoEntry("OutImage").split("/")
            image_comp_parts = out_image_path_parts[-1].split(".")
            image_name = image_comp_parts[0]
            padded_frame_number = StringUtils.ToZeroPaddedString(frameNumber, len(image_comp_parts[-2]))
            image_format = image_comp_parts[-1]
            argument += "-o {0}/{1}.{2}.{3}".format(output_path, image_name, padded_frame_number, image_format)
        else:
            ## Fallback ##
            outputPath = os.path.dirname(usdFile).split('/') #[:-4] We are now going to site the composite USD in the project root.
            outputPath.append("render")
            outputPath = os.path.abspath(os.path.join(*outputPath))
            if not os.path.isdir(outputPath):
                os.mkdir(outputPath)
            filename = Path(usdFile).name
            filename = Path(filename).with_suffix("")
            paddedFrameNumber = StringUtils.ToZeroPaddedString(frameNumber, 4)
            argument += "-o {0}/{1}.{2}.exr".format(outputPath, filename, paddedFrameNumber)

        argument += " --make-output-path"
        argument += " --exrmode 0" ## Legacy exr mode for fusion cryptomattes ##

        self.LogInfo( "Rendering USD file: " + usdFile )
        return argument

    # just incase we want to implement progress at some point
    def HandleStdoutProgress(self):
        self.SetStatusMessage(self.GetRegexMatch(0))
        self.SetProgress(float(self.GetRegexMatch(1)))

    # what to do when an error is detected.
    def HandleStdoutError(self):
        self.FailRender(self.GetRegexMatch(0))
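The two stdout handlers registered in InitializeProcess are driven by regular expressions. As a quick standalone check of what those patterns capture, here is a minimal sketch in plain Python. The sample log lines are made up for illustration, real Husk output may look different, and Deadline's own matching may differ in detail from Python's re module.

```python
import re

# Same patterns as in the plugin's InitializeProcess.
PROGRESS_PATTERN = re.compile(r"ALF_PROGRESS ([0-9]+(?=%))")
ERROR_PATTERN = re.compile(r"USD ERROR(.*)")


def parse_progress(line):
    """Return the progress percentage as a float, or None if the line has none."""
    match = PROGRESS_PATTERN.search(line)
    return float(match.group(1)) if match else None


def is_usd_error(line):
    """Return True if the line would trigger the error handler."""
    return ERROR_PATTERN.search(line) is not None


# Invented sample lines, only meant to exercise the patterns.
print(parse_progress("ALF_PROGRESS 42%"))
print(parse_progress("Loading stage..."))
print(is_usd_error("USD ERROR: could not open layer"))
```

The lookahead (?=%) means the digits only match when a percent sign follows, while the sign itself stays out of the captured group.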

In this file I added some code to the RenderExecutable and RenderArgument functions. Just compare it with the original script.
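To make the string handling in RenderArgument easier to follow, here is a rough standalone sketch of how the custom output path is rebuilt for each frame. It assumes OutImage looks like name.frame.ext with forward slashes (for example the path evaluated on the ROP at submission time); build_output_path is a hypothetical helper name and not part of the plugin.

```python
import posixpath


def build_output_path(out_image, frame):
    """Replace the frame token of 'dir/name.frame.ext' with the given frame,
    zero-padded to the width of the original token."""
    directory = posixpath.dirname(out_image)
    parts = posixpath.basename(out_image).split(".")
    if len(parts) < 3:
        raise ValueError("expected name.frame.ext, got: " + out_image)
    name, frame_token, extension = parts[0], parts[-2], parts[-1]
    padded = str(frame).zfill(len(frame_token))
    return "{}/{}.{}.{}".format(directory, name, padded, extension)


# Placeholder path; the padding width (4) is taken from the "0001" token.
print(build_output_path("D:/proj/render/shot010.0001.exr", 12))
```

Like the plugin code, this only keeps the first and the last two dot-separated parts, so a file name with extra dots (such as shot010.beauty.0001.exr) would lose its middle part.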

I added a new argument for Husk as well:

argument += " --exrmode 0"

This is needed for Cryptomatte .exr files in Fusion. Otherwise the Cryptomattes won't work.

NOTE:

Check out other arguments to pass through here:

https://www.sidefx.com/docs/houdini/ref/utils/husk.html

For example to set OCIO color transform with this line:

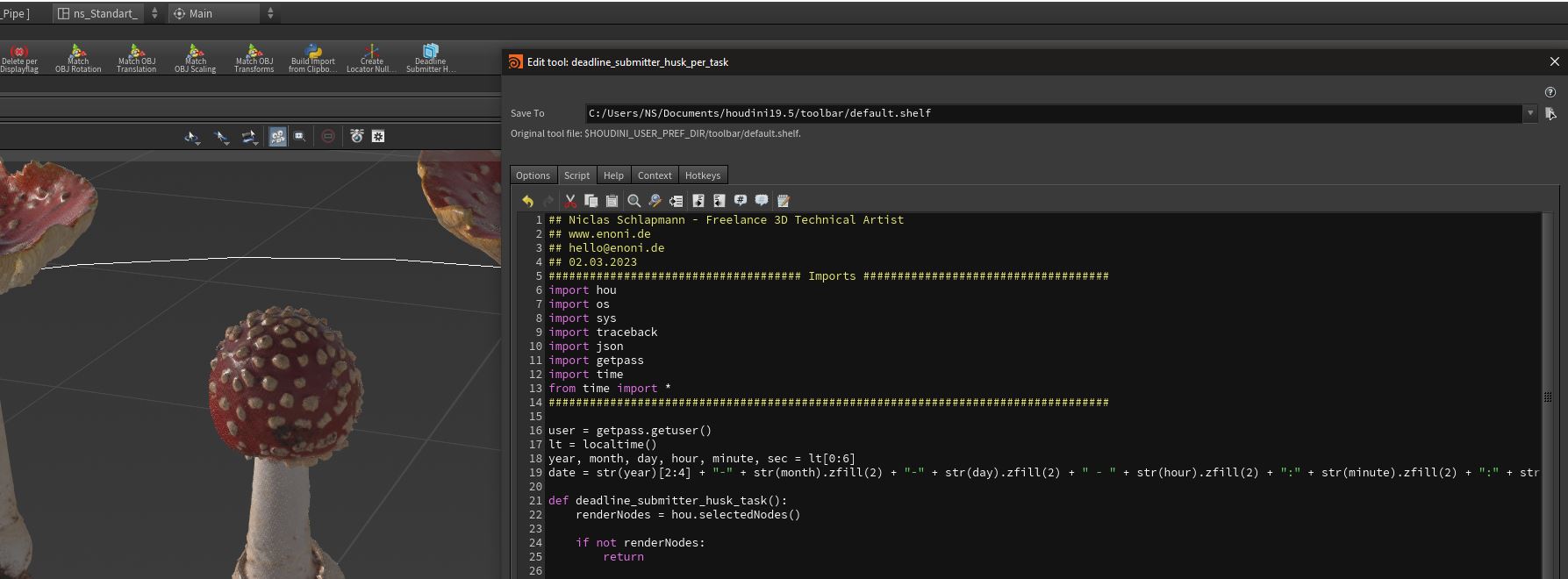

argument += " --ocio 1"Create a custom Houdini Submitter Script for USD-ROPs.

You can just create a Houdini shelf tool or build an entry in the OPmenu.xml to trigger the script.

This will create a script, that has an input prompt where you can define how many frames Deadline will render per task. You can, of course, build a high advanced submitter with a lot more inputs, choices and a fancy GUI.
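To illustrate what the frames-per-task value means, the sketch below splits a frame range into chunks of that size. Deadline does this splitting itself from the Frames and ChunkSize entries of the job file, so this is only an illustration and not how Deadline implements it; chunk_frames is a made-up helper name.

```python
def chunk_frames(first, last, frames_per_task):
    """Split an inclusive frame range into (start, end) chunks of a given size."""
    if frames_per_task < 1:
        raise ValueError("frames_per_task must be at least 1")
    chunks = []
    start = first
    while start <= last:
        # The last chunk may be shorter than frames_per_task.
        end = min(start + frames_per_task - 1, last)
        chunks.append((start, end))
        start = end + 1
    return chunks


print(chunk_frames(1001, 1010, 4))
print(len(chunk_frames(1001, 1010, 1)))
```

A small value gives many short tasks that spread well across render nodes, a large value gives fewer tasks with less per-task startup overhead.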

Note that you have to create a “husk” pool on Deadline, because the script submits its jobs to that pool:

## Niclas Schlapmann - Freelance 3D Technical Artist
## www.enoni.de
## hello@enoni.de
## 02.03.2023

##################################### Imports ####################################
import hou
import os
import sys
import traceback
import json
import getpass
from time import localtime
##################################################################################

user = getpass.getuser()
lt = localtime()
year, month, day, hour, minute, sec = lt[0:6]
date = str(year)[2:4] + "-" + str(month).zfill(2) + "-" + str(day).zfill(2) + " - " + str(hour).zfill(2) + ":" + str(minute).zfill(2) + ":" + str(sec).zfill(2)

def deadline_submitter_husk_task():
    renderNodes = hou.selectedNodes()
    if not renderNodes:
        return
    if renderNodes[0].type().name() not in ["usdrender_rop", "usd_rop"]:
        hou.ui.displayMessage("Select a proper USD-ROP or USDRender-ROP")
        return

    for renderNode in renderNodes:
        jobname = hou.getenv("HIPNAME")
        pool = "husk"
        secondarypool = "husk"
        comment = "submitted by <" + user + "> " + date
        department = "enoni.de"

        ## frame range: from the ROP if a range is set, else the current frame ##
        if renderNode.evalParm("trange") >= 1:
            framelist = str(int(renderNode.evalParm("f1"))) + "-" + str(int(renderNode.evalParm("f2")))
        else:
            framelist = str(int(hou.frame())) + "-" + str(int(hou.frame()))

        framecount = int(renderNode.evalParm("f2")) - int(renderNode.evalParm("f1")) + 1
        frame_input_count = hou.ui.readInput("Frames per task:", buttons=("OK", "Cancel"), initial_contents=str(framecount))
        if frame_input_count[0] == 1:
            # "Cancel" was pressed
            return

        ## create prop dictionary ##
        jobProperties = {
            'batch': False,
            'jobname': jobname,
            'comment': comment,
            'department': department,
            'pool': pool,
            'secondarypool': secondarypool,
            'group': 'none',
            'priority': 99,
            'tasktimeout': 0,
            'autotimeout': 0,
            'concurrent': 1,
            'machinelimit': 0,
            'slavelimit': 1,
            'limits': '',
            'onjobcomplete': 'Nothing',
            'jobsuspended': 0,
            'shouldprecache': 1,
            'isblacklist': 0,
            'machinelist': '',
            'overrideframes': 1,
            'framelist': framelist,
            'framespertask': int(frame_input_count[1]),
            'bits': '64bit',
            'submitscene': 0,
            'isframedependent': 0,
            'gpuopenclenable': 0,
            'gpuspertask': 0,
            'gpudevices': '',
            'ignoreinputs': 0,
            'separateWedgeJobs': 0,
            'mantrajob': 0,
            'mantrapool': pool,
            'mantrasecondarypool': secondarypool,
            'mantragroup': 'none',
            'mantrapriority': 50,
            'mantratasktimeout': 0,
            'mantraautotimeout': 0,
            'mantraconcurrent': 1,
            'mantramachinelimit': 0,
            'mantraslavelimit': 1,
            'mantralimits': '',
            'mantraonjobcomplete': 'Nothing',
            'mantraisblacklist': 0,
            'mantramachinelist': '',
            'mantrathreads': 0,
            'mantralocalexport': 0,
            'arnoldjob': 1,
            'arnoldpool': pool,
            'arnoldsecondarypool': secondarypool,
            'arnoldgroup': 'none',
            'arnoldpriority': 50,
            'arnoldtasktimeout': 0,
            'arnoldautotimeout': 0,
            'arnoldconcurrent': 1,
            'arnoldmachinelimit': 0,
            'arnoldslavelimit': 1,
            'arnoldonjobcomplete': 'Nothing',
            'arnoldlimits': '',
            'arnoldisblacklist': 0,
            'arnoldmachinelist': '',
            'arnoldthreads': 0,
            'arnoldlocalexport': 1,
            'rendermanjob': 0,
            'rendermanpool': pool,
            'rendermansecondarypool': secondarypool,
            'rendermangroup': 'none',
            'rendermanpriority': 50,
            'rendermantasktimeout': 0,
            'rendermanconcurrent': 1,
            'rendermanmachinelimit': 0,
            'rendermanlimits': '',
            'rendermanonjobcomplete': 'Nothing',
            'rendermanisblacklist': 0,
            'rendermanmachinelist': '',
            'rendermanthreads': 0,
            'rendermanarguments': '',
            'rendermanlocalexport': 0,
            'redshiftjob': 0,
            'redshiftpool': pool,
            'redshiftsecondarypool': secondarypool,
            'redshiftgroup': 'none',
            'redshiftpriority': 50,
            'redshifttasktimeout': 0,
            'redshiftautotimeout': 0,
            'redshiftconcurrent': 1,
            'redshiftmachinelimit': 0,
            'redshiftslavelimit': 1,
            'redshiftlimits': '',
            'redshiftonjobcomplete': 'Nothing',
            'redshiftisblacklist': 0,
            'redshiftmachinelist': '',
            'redshiftarguments': '',
            'redshiftlocalexport': 0,
            'usdjob': 1,
            'usdpool': pool,
            'usdsecondarypool': secondarypool,
            'usdgroup': 'none',
            'usdpriority': 50,
            'usdtasktimeout': 0,
            'usdautotimeout': 0,
            'usdconcurrent': 1,
            'usdmachinelimit': 0,
            'usdslavelimit': 1,
            'usdlimits': '',
            'usdonjobcomplete': 'Nothing',
            'usdisblacklist': 0,
            'usdmachinelist': '',
            'usdarguments': '',
            'usdlocalexport': 1,
            'usdloglevel': 2,
            'vrayjob': 0,
            'vraypool': pool,
            'vraysecondarypool': secondarypool,
            'vraygroup': 'none',
            'vraypriority': 50,
            'vraytasktimeout': 0,
            'vrayautotimeout': 0,
            'vrayconcurrent': 1,
            'vraymachinelimit': 0,
            'vrayslavelimit': 1,
            'vraylimits': '',
            'vrayonjobcomplete': 'Nothing',
            'vrayisblacklist': 0,
            'vraymachinelist': '',
            'vraythreads': 0,
            'vrayarguments': '',
            'vraylocalexport': 0,
            'tilesenabled': 0,
            'tilesinx': 3,
            'tilesiny': 3,
            'tilessingleframeenabled': 1,
            'tilessingleframe': 1,
            'jigsawenabled': 1,
            'jigsawregioncount': 0,
            'jigsawregions': [],
            'submitdependentassembly': 1,
            'backgroundoption': 'Blank Image',
            'backgroundimage': '',
            'erroronmissingtiles': '1',
            'erroronmissingbackground': '0',
            'cleanuptiles': '1'
        }

        ## write USD from USD-ROP ##
        usd_file_path = renderNode.evalParm("lopoutput")
        if os.path.isfile(usd_file_path):
            if hou.ui.displayMessage("USD render file already exists. Overwrite?", buttons=("Yes", "Abort")) == 0:
                renderNode.parm("execute").pressButton()
            else:
                return
        else:
            renderNode.parm("execute").pressButton()

        ## Submit to Deadline ##
        flag = 0

        ## imports and sys paths for deadline ##
        try:
            from CallDeadlineCommand import CallDeadlineCommand
        except ImportError:
            path = ""
            print("The CallDeadlineCommand.py script could not be found in the Houdini installation. Please make sure that the Deadline Client has been installed on this machine.\n")
            hou.ui.displayMessage("The CallDeadlineCommand.py script could not be found in the Houdini installation. Please make sure that the Deadline Client has been installed on this machine.", title="Submit Houdini To Deadline")
            # nothing below can work without CallDeadlineCommand
            return
        else:
            path = CallDeadlineCommand(["-GetRepositoryPath", "submission/Houdini/Main"]).strip()
            if path:
                path = path.replace("\\", "/")
                # Add the path to the system path
                if path not in sys.path:
                    print("Appending \"" + path + "\" to system path to import SubmitHoudiniToDeadline module")
                    sys.path.append(path)
                # Import the script and call the main() function
                try:
                    import SubmitHoudiniToDeadline
                except:
                    print(traceback.format_exc())
                    print("The SubmitHoudiniToDeadline.py script could not be found in the Deadline Repository. Please make sure that the Deadline Client has been installed on this machine, that the Deadline Client bin folder is set in the DEADLINE_PATH environment variable, and that the Deadline Client has been configured to point to a valid Repository.")
            else:
                print("The SubmitHoudiniToDeadline.py script could not be found in the Deadline Repository. Please make sure that the Deadline Client has been installed on this machine, that the Deadline Client bin folder is set in the DEADLINE_PATH environment variable, and that the Deadline Client has been configured to point to a valid Repository.")

        ## Get Deadline Info ##
        print("Grabbing submitter info...")
        try:
            output = json.loads(CallDeadlineCommand(["-prettyJSON", "-GetSubmissionInfo", "Pools", "Groups", "MaxPriority", "TaskLimit", "UserHomeDir", "RepoDir:submission/Houdini/Main", "RepoDir:submission/Integration/Main", "RepoDirNoCustom:draft", "RepoDirNoCustom:submission/Jigsaw", ]))
        except:
            print("Unable to get submitter info from Deadline:\n\n" + traceback.format_exc())
            raise

        if output["ok"]:
            submissionInfo = output["result"]
            hou.putenv("Deadline_Submission_Info", json.dumps(submissionInfo))
        else:
            print("DeadlineCommand returned a bad result and was unable to grab the submitter info.\n\n" + output["result"])
            raise Exception(output["result"])

        ## Submit Render Job ##
        try:
            import SubmitHoudiniToDeadlineFunctions as SHTDFunctions
            flag = 1
        except Exception as e:
            print(e)
            hou.ui.displayMessage("ns_Pipe> Library import failure. Make sure you have a proper Deadline installation.")

        if flag:
            try:
                # custom values that end up in the plugin info file
                jobProperties.update({"ns_pipe_hou_version": hou.getenv("_HIP_SAVEVERSION")})
                jobProperties.update({"ns_pipe_image_out" : renderNode.parm("spare_output_image").eval()})
                jobIds = SHTDFunctions.SubmitRenderJob_husk(renderNode, jobProperties, "")
            except Exception as e:
                print(e)
                hou.ui.displayMessage("ns_Pipe> Can't submit to Deadline Repository.")

deadline_submitter_husk_task()

IMPORTANT:

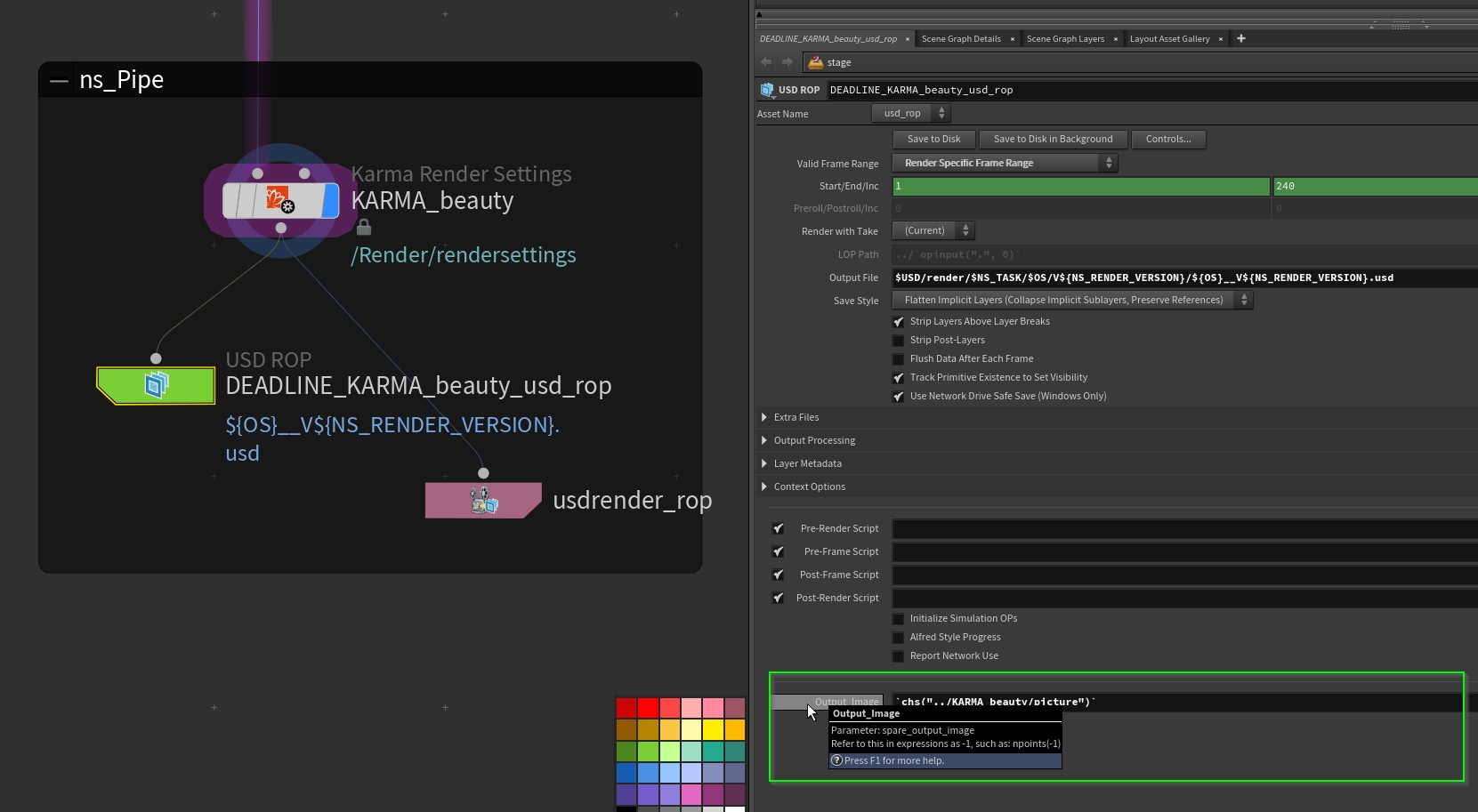

You need a string parameter on your USD ROP where the script finds the path for the image output. The parameter has to be named “spare_output_image”. I just reference the path from the Karma settings node.

When you now trigger the script with a USD ROP selected, you can send USD Husk render jobs to Deadline. Modify it to your specific needs and build your own Deadline submitter.